2019_IJCAI_Deep Adversarial Social Recommendation

[Paper reading notes] 2019_IJCAI_Deep Adversarial Social Recommendation

Paper download link: https://www.ijcai.org/Proceedings/2019/0187.pdf

Published in: IJCAI

Publish time: 2019

Authors and affiliations:

- Wenqi Fan¹, Tyler Derr², Yao Ma², Jianping Wang¹, Jiliang Tang² and Qing Li³

- ¹ Department of Computer Science, City University of Hong Kong

- ² Data Science and Engineering Lab, Michigan State University

- ³ Department of Computing, The Hong Kong Polytechnic University

- wenqifan03@gmail.com, {derrtyle, mayao4}@msu.edu, jianwang@cityu.edu.hk, tangjili@msu.edu, csqli@comp.polyu.edu.hk

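Datasets (as described in the paper):

- Ciao http://www.cse.msu.edu/~tangjili/trust.html (given by the authors in the paper)

- Epinions http://www.cse.msu.edu/~tangjili/trust.html (given by the authors in the paper)

Code:

Other:

Articles written by others

- Adversarial Social Recommendation (covers the key points)

Brief summary of the novelty: 2018_NAACL_KBGAN: Adversarial Learning for Knowledge Graph Embeddings combined GANs with knowledge graph embeddings (KGE) to improve the efficiency of KGE, specifically targeting negative samples. This paper applies adversarial learning to SoRec, again targeting negative samples.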

- (1) In this paper, we present a Deep Adversarial SOcial recommendation model (DASO), which learns separated user representations in item domain and social domain.

- (2) Particularly, we propose to transfer users’ information from social domain to item domain by using a bidirectional mapping method.

- (3) In addition, we also introduce the adversarial learning to optimize our entire framework by generating informative negative samples.

- (4) The generator and the discriminator play the same roles as in a generic GAN model.

Abstract

- Recent years have witnessed rapid developments on social recommendation techniques for improving the performance of recommender systems due to the growing influence of social networks to our daily life. The majority of existing social recommendation methods unify user representation for the user-item interactions (item domain) and user-user connections (social domain).

- However, it may restrain user representation learning in each respective domain, since users behave and interact differently in two domains, which makes their representations to be heterogeneous.

- In addition, most of traditional recommender systems can not efficiently optimize these objectives, since they utilize negative sampling technique which is unable to provide enough informative guidance towards the training during the optimization process.

- In this paper, to address the aforementioned challenges, we propose a novel Deep Adversarial SOcial recommendation framework (DASO).

- It adopts a bidirectional mapping method to transfer users’ information between social domain and item domain using adversarial learning.

- Comprehensive experiments on two real-world datasets show the effectiveness of the proposed method.

1 Introduction

(1) In recent years, we have seen an increasing amount of attention on social recommendation, which harnesses social relations to boost the performance of recommender systems [Tang et al., 2016b; Fan et al., 2019; Wang et al., 2016]. Social recommendation is based on the intuitive ideas that people in the same social group are likely to have similar preferences, and that users will gather information from their experienced friends (e.g., classmates, relatives, and colleagues) when making decisions. Therefore, utilizing users’ social relations has been proven to greatly enhance the performance of many recommender systems [Ma et al., 2008; Fan et al., 2019; Tang et al., 2013b; 2016a].

(2) In Figure 1, we observe that in social recommendation we have both the item and social domains, which represent the user-item interactions and user-user connections, respectively. Currently, the most effective way to incorporate the social information for improving recommendations is when learning user representations, which is commonly achieved in ways such as:

- using trust propagation [Jamali and Ester, 2010],

- incorporating a user’s social neighborhood information [Fan et al., 2018],

- or sharing a common user representation for the user-item interactions and social relations with a co-factorization method [Ma et al., 2008].

However, as shown in Figure 1, although users bridge the gap between these two domains, their representations should be heterogeneous. This is because users behave and interact differently in the two domains. Thus, using a unified user representation may restrain user representation learning in each respective domain and results in an inflexible/limited transferring of knowledge from the social relations to the item domain. Therefore, one challenge is to learn separated user representations in two domains while transferring the information from the social domain to the item domain for recommendation.

(3) In this paper, we adopt a nonlinear mapping operation to transfer user’s information from the social domain to the item domain, while learning separated user representations in the two domains.

Nevertheless, learning the representations is challenging due to the inherent data sparsity problem in both domains. Thus, to alleviate this problem, we propose to use a bidirectional mapping between the two domains, such that we can cycle information between them to progressively enhance the user’s representations in both domains.

However, for optimizing the user representations and item representations, most existing methods utilize the negative sampling technique, which is quite ineffective [Wang et al., 2018b]. This is due to the fact that during the beginning of the training process, most of the negative user-item samples are still within the margin to the real user-item samples, but later during the optimization process, negative sampling is unable to provide “difficult” and informative samples to further improve the user representations and item representations [Wang et al., 2018b; Cai and Wang, 2018]. Thus, it is desired to have samples dynamically generated throughout the training process to better guide the learning of the user representations and item representations.

(4) Recently, Generative Adversarial Networks (GANs) [Goodfellow et al., 2014], which consist of two models to process adversarial learning, have shown great success across various domains due to their ability to learn an underlying data distribution and generate synthetic samples [Mao et al., 2017; 2018; Brock et al., 2019; Liu et al., 2018; Wang et al., 2017; 2018a; Derr et al., 2019].

- This is performed through the use of a generator and a discriminator.

- The generator tries to generate realistic fake data samples to fool the discriminator, which distinguishes whether a given data sample is produced by the generator or comes from the real data distribution.

- A minimax game is played between the generator and discriminator, where this adversarial learning can train these two models simultaneously for mutual promotion.

- In [Wang et al., 2018b], adversarial learning has been used to address the limitation of typical negative sampling.

(5) Thus, we propose to harness adversarial learning in social recommendation to generate “difficult” negative samples to guide our framework in learning better user and item representations while further utilizing it to optimize our entire framework.

Our major contributions can be summarized as follows:

- We introduce a principled way to transfer users’ information from social domain to item domain using a bidirectional mapping method where we cycle information between the two domains to progressively enhance the user representations;

- We propose a Deep Adversarial SOcial recommender system DASO, which can harness the power of adversarial learning to dynamically generate ==“difficult” negative samples==, learn the bidirectional mappings between the two domains, and ultimately optimize better user and item representations; and

- We conduct comprehensive experiments on two real-world datasets to show the effectiveness of the proposed model.

2 The Proposed Framework

- Let $\mathcal{U} = \{u_1, u_2, ..., u_N\}$ and $\mathcal{V} = \{v_1, v_2, ..., v_M\}$ denote the sets of users and items respectively,

- where $N$ ($M$) is the number of users (items).

- We define the user-item interaction matrix $R \in \mathbb{R}^{N\times M}$ from users’ implicit feedback,

- where the $(i, j)$-th element $r_{i,j}$ is 1 if there is an interaction (e.g., clicked/bought) between user $u_i$ and item $v_j$, and 0 otherwise.

- However, $r_{i,j} = 1$ does not mean user $u_i$ actually likes item $v_j$.

- Similarly, $r_{i,j} = 0$ does not mean $u_i$ does not like item $v_j$, since it can be that the user $u_i$ is not aware of the item $v_j$.

- The social network between users can be described by a matrix $S \in \mathbb{R}^{N\times N}$,

- where $s_{i,j} = 1$ if there is a social relation between user $u_i$ and user $u_j$, and 0 otherwise.

- Given the interaction matrix $R$ and the social network $S$, we aim to predict the unobserved entries (i.e., those where $r_{i,j} = 0$) in $R$.
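The two input matrices defined above can be illustrated with a minimal sketch. The function name `build_matrices` and the index-pair input format are assumptions made for this example; social links are stored as directed pairs, which is also an assumption (a symmetric network would add the reverse pair).

```python
def build_matrices(n_users, n_items, interactions, social_links):
    """Build the binary matrices R (N x M) and S (N x N) as nested lists.

    interactions: iterable of (user_index, item_index) pairs with implicit feedback.
    social_links: iterable of (user_index, user_index) pairs, treated as directed.
    """
    R = [[0] * n_items for _ in range(n_users)]
    for i, j in interactions:
        R[i][j] = 1  # r_ij = 1: an interaction was observed

    S = [[0] * n_users for _ in range(n_users)]
    for i, k in social_links:
        S[i][k] = 1  # s_ik = 1: user i has a social relation to user k

    return R, S


# Toy example: 2 users, 3 items.
R, S = build_matrices(2, 3, [(0, 1), (1, 2)], [(0, 1)])
```

The zero entries of `R` are the unobserved entries that the model is asked to rank.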

2.1 An Overview of the Proposed Framework


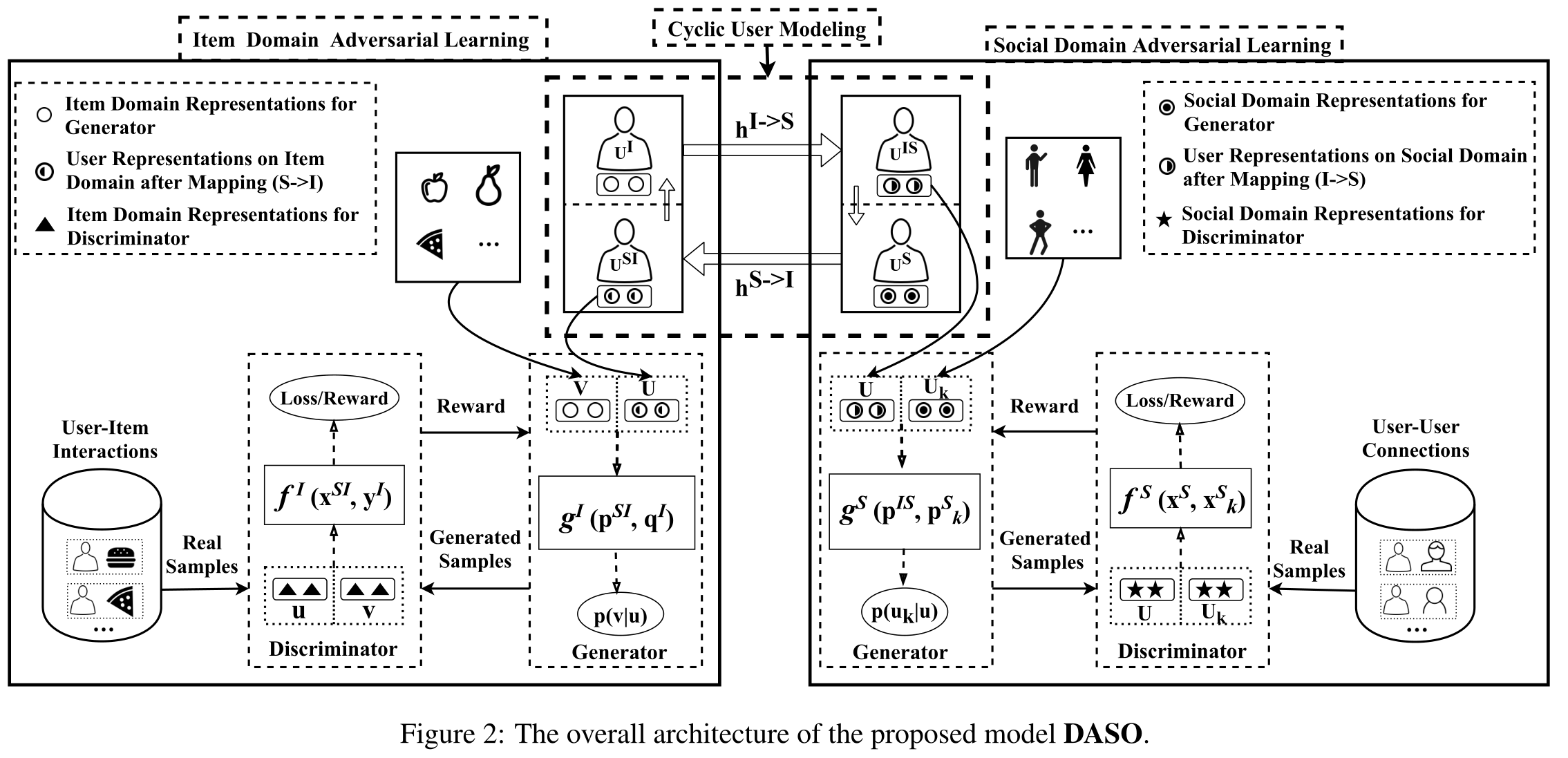

(1) The architecture of the proposed model is shown in Figure 2. The information is from two domains, which are the item domain $I$ and the social domain $S$.

- The model consists of three components:

- cyclic user modeling,

- item domain adversarial learning,

- and social domain adversarial learning.

- The cyclic user modeling is to model user representations on two domains.

- The item domain adversarial learning is to adopt the adversarial learning for dynamically generating “difficult” and informative negative samples to guide the learning of user and item representations.

- The generator is utilized to ‘sample’ (recommend) items for each user and output user-item pairs as fake samples;

- the other is the discriminator, which distinguishes the user-item pair samples sampled from the real user-item interactions from the generated user-item pair samples.

- The social domain adversarial learning also similarly consists of a generator and a discriminator.

(2) There are four types of representations in the two domains.

- In the item domain $I$, we have two types of representations, including

- the item domain representations of the generator ($p^I_i \in \mathbb{R}^d$ for user $u_i$ and $q^I_j \in \mathbb{R}^d$ for item $v_j$),

- and the item domain representations of the discriminator ($x^I_i \in \mathbb{R}^d$ for user $u_i$ and $y^I_j \in \mathbb{R}^d$ for item $v_j$).

- The social domain $S$ also contains two types of representations, including

- the social domain representations of the generator ($p^S_i \in \mathbb{R}^d$ for user $u_i$),

- and the social domain representations of the discriminator ($x^S_i \in \mathbb{R}^d$ for user $u_i$).

2.2 Cyclic User Modeling

- Cyclic user modeling aims to learn a relation between the user representations in the item domain $I$ and the social domain $S$. As shown in the top part of Figure 2,

- we first adopt a nonlinear mapping operation, denoted as $h^{S\to I}$, to transfer user’s information from the social domain to the item domain, while learning separated user representations in the two domains.

- Then, a bidirectional mapping between these two domains (achieved by including another nonlinear mapping $h^{I\to S}$) is utilized to help cycle the information between them to progressively enhance the user representations in both domains.

2.2.1 Transferring Social Information to Item Domain

(1) In social networks, a person’s preferences can be influenced by their social interactions, as suggested by sociologists [Fan et al., 2019; 2018; Wasserman and Faust, 1994]. Therefore, a user’s social relations from the social network should be incorporated into their user representation in the item domain.

(2) We propose to adopt a nonlinear mapping operation to transfer user’s information from the social domain to the item domain.

- More specifically, the user representation on the social domain $p^S_i$ is transferred to the item domain via a Multi-Layer Perceptron (MLP) denoted as $h^{S\to I}$.

- The transferred user representation from the social domain is denoted as $p^{SI}_i$.

- More formally, the nonlinear mapping is as follows: $p^{SI}_i = h^{S\to I}(p^S_i) = W_L \cdot (\cdots a(W_2 \cdot a(W_1 \cdot p^S_i + b_1) + b_2) \cdots) + b_L$,

- where the $W_s$ and $b_s$ are the weights and biases for the layers of the neural network having $L$ layers,

- and $a$ is a nonlinear activation function.
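The mapping formula above can be sketched in plain Python. This is a minimal illustration, not the authors' TensorFlow implementation: the helper names, the layer sizes, the Gaussian initialisation, and the choice of `tanh` as the activation $a$ are all assumptions of this example. As in the formula, the last layer is kept linear.

```python
import math
import random


def make_mlp(sizes, seed=0):
    """Randomly initialise (W, b) pairs for layer sizes [d_in, ..., d_out]."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        W = [[rng.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)]
        b = [0.0] * n_out
        layers.append((W, b))
    return layers


def affine(W, b, x):
    """Compute W.x + b for a weight matrix stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def mlp_forward(layers, x, act=math.tanh):
    """Apply a(W.x + b) for every layer except the last, which stays linear."""
    for W, b in layers[:-1]:
        x = [act(z) for z in affine(W, b, x)]
    W, b = layers[-1]
    return affine(W, b, x)


# Hypothetical sizes: d = 4 with one hidden layer of width 8.
h_s_to_i = make_mlp([4, 8, 4])
p_social = [0.1, -0.2, 0.3, 0.0]         # p_i^S
p_si = mlp_forward(h_s_to_i, p_social)   # p_i^{SI}, transferred to the item domain
```

A second network built the same way can play the role of the reverse mapping from the item domain to the social domain.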

2.2.2 Bidirectional Mapping with Cycle Reconstruction

(1) As user-item interactions and user-user connections are often very sparse, learning separated user representations is challenging.

- Therefore, to partially alleviate this issue, we propose to utilize a bidirectional mapping between the two domains, such that we can cycle information between them to progressively enhance the user representations in both domains.

- To achieve this, another nonlinear mapping operation, denoted as $h^{I\to S}$, is adopted to transfer information from the item domain to the social domain: $p^{IS}_i = h^{I\to S}(p^I_i)$, which has the same network structure as $h^{S\to I}$.

(2) This bidirectional mapping allows knowledge to be transferred between item and social domains. To learn these mappings, we further introduce cycle reconstruction. Its intuition is that transferred knowledge in the target domain should be reconstructed to the original knowledge in the source domain. Next we will elaborate cycle reconstruction.

(3) For user $u_i$’s item domain representation $p^I_i$, the user representation with cycle reconstruction should be able to map $p^I_i$ back to the original domain, as follows: $p^I_i \longrightarrow h^{I\to S}(p^I_i) \longrightarrow h^{S\to I}(h^{I\to S}(p^I_i)) \approx p^I_i$.

- Likewise, for user $u_i$’s social domain representation $p^S_i$, the user representation with cycle reconstruction can also bring $p^S_i$ back to the original domain: $p^S_i \longrightarrow h^{S\to I}(p^S_i) \longrightarrow h^{I\to S}(h^{S\to I}(p^S_i)) \approx p^S_i$.

(4) We can formulate this procedure using a cycle reconstruction loss, which needs to be minimized.
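One form of the loss that is consistent with the two round-trip conditions above is sketched here. The name $\mathcal{L}_{cyc}$ and the use of an L2 norm on each reconstruction error are assumptions of this sketch rather than something these notes state:

```latex
\mathcal{L}_{cyc}(h^{S\to I}, h^{I\to S}) = \sum_{i=1}^{N} \Big(
  \big\| h^{S\to I}\big(h^{I\to S}(p^I_i)\big) - p^I_i \big\|_2
  + \big\| h^{I\to S}\big(h^{S\to I}(p^S_i)\big) - p^S_i \big\|_2 \Big)
```

The first term penalises the item-to-social-to-item round trip and the second the social-to-item-to-social round trip, so the loss is zero exactly when both reconstructions are perfect.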

2.3 Item Domain Adversarial Learning

(1) To address the limitation of negative sampling for recommendation on the ranking task, we propose to harness adversarial learning to generate “difficult” and informative samples to guide the framework in learning better user and item representations in the item domain. As shown in the bottom left part of Figure 2, the adversarial learning on the item domain consists of two components:

- Discriminator $D^I(u_i, v; \phi^I_D)$, parameterized by $\phi^I_D$, aims to distinguish the real user-item pairs $(u_i, v)$ and the user-item pairs generated by the generator.

- Generator $G^I(v|u_i; \theta^I_G)$, parameterized by $\theta^I_G$, tries to fit the underlying real conditional distribution $p^I_{real}(v|u_i)$ as much as possible, and generates (or, to be more precise, selects) the most relevant items to a given user $u_i$.

(2) Formally, $D^I$ and $G^I$ play a two-player minimax game with value function $\mathcal{L}^I_{adv}(G^I, D^I)$.
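Assuming the game takes the standard conditional GAN form used by IRGAN-style recommenders (an assumption of this sketch, built only from the symbols defined above), the value function can be written as:

```latex
\min_{\theta^I_G} \max_{\phi^I_D} \mathcal{L}^I_{adv}(G^I, D^I)
  = \sum_{i=1}^{N} \Big(
      \mathbb{E}_{v \sim p^I_{real}(\cdot | u_i)} \big[ \log D^I(u_i, v; \phi^I_D) \big]
    + \mathbb{E}_{v \sim G^I(\cdot | u_i; \theta^I_G)} \big[ \log \big( 1 - D^I(u_i, v; \phi^I_D) \big) \big]
    \Big)
```

The first expectation rewards the discriminator for accepting real pairs, and the second rewards it for rejecting generated pairs; the generator only influences the second term.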

2.3.1 Item Domain Discriminator Model

- (1) Discriminator $D^I$ aims to distinguish real user-item pairs (i.e., real samples) and the generated “fake” samples.

- The discriminator $D^I$ estimates the probability of item $v_j$ being relevant (bought or clicked) to a given user $u_i$ using the sigmoid function and a score function $f^I_{\phi^I_D}$.

- (2) Given real samples and generated fake samples, the objective for the discriminator $D^I$ is to maximize the log-likelihood of assigning the correct labels to both real and generated samples. The discriminator can be optimized by minimizing the objective in eq. (1) with the generator fixed using stochastic gradient methods.
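The discriminator's probability estimate can be sketched as a sigmoid applied to a score. The example assumes the inner-product score with an item bias that the notes describe later for the item domain; the function names are hypothetical.

```python
import math


def sigmoid(z):
    """Logistic function 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def score(x_user, y_item, item_bias=0.0):
    """Inner-product score f(x_i, y_j) = x_i . y_j + a_j (assumed form)."""
    return sum(a * b for a, b in zip(x_user, y_item)) + item_bias


def discriminator_prob(x_user, y_item, item_bias=0.0):
    """Estimated probability that the item is relevant to the user."""
    return sigmoid(score(x_user, y_item, item_bias))


# A zero score maps to probability 0.5; larger scores push it toward 1.
p_neutral = discriminator_prob([0.0, 0.0], [1.0, 1.0])
p_aligned = discriminator_prob([1.0, 1.0], [1.0, 1.0])
```

Training the discriminator then amounts to pushing this probability up for real pairs and down for pairs sampled from the generator.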

2.3.2 Item Domain Generator Model

(1) On the other hand, the purpose of the generator $G^I$ is to approximate the underlying real conditional distribution $p^I_{real}(v|u_i)$, and generate the most relevant items for any given user $u_i$.

(2) We define the generator using the softmax function over all the items according to the transferred user representation $p^{SI}_i$ from the social domain to the item domain.

- Here $g^I_{\theta^I_G}$ is a score function reflecting the chance of $v_j$ being clicked/purchased by $u_i$. Given a user $u_i$, an item $v_j$ can be sampled from the distribution $G^I(v_j | u_i; \theta^I_G)$.
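A minimal sketch of the softmax generator and the sampling step follows. It assumes the inner-product score with an item bias described later in these notes; the function names and the toy vectors are hypothetical.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def generator_distribution(p_si, item_vecs, item_biases):
    """G(v_j | u_i): softmax over g(p_i^{SI}, q_j) = p_i^{SI} . q_j + b_j for all items."""
    scores = [
        sum(a * b for a, b in zip(p_si, q)) + b_j
        for q, b_j in zip(item_vecs, item_biases)
    ]
    return softmax(scores)


def sample_item(probs, rng):
    """Draw one item index according to the generator distribution."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


probs = generator_distribution([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0])
fake_item = sample_item(probs, random.Random(0))
```

The sampled index, paired with the user, is what the discriminator receives as a "fake" user-item pair.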

(3) We note that the process of generating a relevant item for a given user is discrete. Thus, we cannot optimize the generator $G^I$ via stochastic gradient descent methods [Wang et al., 2017]. Following [Sutton et al., 2000; Schulman et al., 2015], we adopt the policy gradient method usually adopted in reinforcement learning to optimize the generator.

(4) To learn the parameters for the generator, we need to solve the corresponding minimization problem.
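Under the assumption that the discriminator is a sigmoid of its score function, $D^I = \sigma(f^I_{\phi^I_D})$, the generator's problem can be sketched as follows. This is a reconstruction from the surrounding definitions, not a verbatim formula:

```latex
\min_{\theta^I_G} \sum_{i=1}^{N}
  \mathbb{E}_{v_j \sim G^I(\cdot | u_i; \theta^I_G)}
  \big[ \log \big( 1 - D^I(u_i, v_j) \big) \big]
% Since 1 - \sigma(f) = 1 / (1 + \exp(f)), we have \log(1 - \sigma(f)) = -\log(1 + \exp(f)),
% so the minimization is equivalent to:
\max_{\theta^I_G} \sum_{i=1}^{N}
  \mathbb{E}_{v_j \sim G^I(\cdot | u_i; \theta^I_G)}
  \big[ \log \big( 1 + \exp( f^I_{\phi^I_D}(x^I_i, y^I_j) ) \big) \big]
```

The quantity inside the second expectation is the term that serves as the reward in the reinforcement learning view.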

(5) Now, this problem can be viewed in a reinforcement learning setting, where $K(x^I_i, y^I_j) = \log(1 + \exp(f^I_{\phi^I_D}(x^I_i, y^I_j)))$ is the reward given to the action “selecting $v_j$ given a user $u_i$” performed according to the policy probability $G^I(v|u_i)$. The generator is then updated with the policy gradient.

- Specifically, the gradient $\nabla_{\theta^I_G}\mathcal{L}^I_{adv}(G^I, D^I)$ is an expected summation over the gradients $\nabla_{\theta^I_G}\log G^I(v_j | u_i)$ weighted by $\log(1 + \exp(f^I_{\phi^I_D}(x^I_i, y^I_j)))$.
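The reward-weighted gradient described above can be estimated by sampling (a REINFORCE-style estimate). The sketch below is a simplification made for illustration: it differentiates with respect to the softmax logits of a single user rather than the embedding parameters, and the function names and toy numbers are hypothetical.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def softplus(z):
    """Stable log(1 + exp(z)), used here as the reward K."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def policy_gradient_logits(logits, disc_scores, n_samples, rng):
    """Monte Carlo estimate of E_{j ~ G}[ K_j * d log G(j) / d logits ].

    logits:      generator scores g(p_i^{SI}, q_j) for one user over all items.
    disc_scores: discriminator scores f(x_i, y_j) for the same user and items.
    """
    probs = softmax(logits)
    grad = [0.0] * len(logits)
    for _ in range(n_samples):
        j = rng.choices(range(len(probs)), weights=probs, k=1)[0]
        reward = softplus(disc_scores[j])
        for k in range(len(logits)):
            indicator = 1.0 if k == j else 0.0
            # d log softmax(j) / d logit_k = 1[k == j] - probs[k]
            grad[k] += reward * (indicator - probs[k]) / n_samples
    return grad


grad = policy_gradient_logits([0.0, 1.0, -1.0], [0.5, -0.2, 1.3], 50, random.Random(0))
```

Stepping the logits along `grad` raises the probability of items that the discriminator scores highly, which is how the discriminator guides the generator toward harder negatives.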

(6) The optimal parameters of $G^I$ and $D^I$ can be learned by alternately minimizing and maximizing the value function $\mathcal{L}^I_{adv}(G^I, D^I)$.

- In each iteration, discriminator $D^I$ is trained with real samples from $p^I_{real}(\cdot | u_i)$ and generated samples from generator $G^I$;

- the generator $G^I$ is updated with policy gradient under the guidance of $D^I$.

(7) Note that, unlike traditional recommender systems, which optimize user and item representations with typical negative sampling, the adversarial learning technique tries to generate “difficult” and high-quality negative samples to guide the learning of user and item representations.

2.4 Social Domain Adversarial Learning

(1) In order to learn better user representations from the social perspective, another adversarial learning is harnessed in the social domain. Likewise, the adversarial learning in the social domain consists of two components, as shown in the bottom right part of Figure 2.

- Discriminator $D^S(u_i, u; \phi^S_D)$, parameterized by $\phi^S_D$, aims to distinguish the real connected user-user pairs $(u_i, u)$ and the fake user-user pairs generated by the generator $G^S$.

- Generator $G^S(u | u_i; \theta^S_G)$, parameterized by $\theta^S_G$, tries to fit the underlying real conditional distribution $p^S_{real}(u | u_i)$ as much as possible, and generates (or, to be more precise, selects) the most relevant users to the given user $u_i$.

(2) Formally, $D^S$ and $G^S$ play a two-player minimax game with value function $\mathcal{L}^S_{adv}(G^S, D^S)$.
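Assuming the social domain game mirrors the standard conditional GAN form (an assumption of this sketch, using only the symbols defined above), the value function can be written as:

```latex
\min_{\theta^S_G} \max_{\phi^S_D} \mathcal{L}^S_{adv}(G^S, D^S)
  = \sum_{i=1}^{N} \Big(
      \mathbb{E}_{u \sim p^S_{real}(\cdot | u_i)} \big[ \log D^S(u_i, u; \phi^S_D) \big]
    + \mathbb{E}_{u \sim G^S(\cdot | u_i; \theta^S_G)} \big[ \log \big( 1 - D^S(u_i, u; \phi^S_D) \big) \big]
    \Big)
```

Here the "real" samples are users actually connected to $u_i$ in the social network, and the "fake" samples are users selected by $G^S$.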

2.4.1 Social Domain Discriminator

- The discriminator $D^S$ aims to distinguish the real user-user pairs and the generated ones. The discriminator $D^S$ estimates the probability of user $u_k$ being connected to user $u_i$ with a sigmoid function and a score function $f^S_{\phi^S_D}$.
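Written out, a sigmoid over the score function takes the following form. The arguments of $f^S_{\phi^S_D}$ are assumed here to be the discriminator's social domain representations of the two users:

```latex
D^S(u_i, u_k; \phi^S_D) = \sigma\big( f^S_{\phi^S_D}(x^S_i, x^S_k) \big)
  = \frac{1}{1 + \exp\big( -f^S_{\phi^S_D}(x^S_i, x^S_k) \big)}
```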

2.4.2 Social Domain Generator

(1) The purpose of the generator, $G^S$, is to approximate the underlying real conditional distribution $p^S_{real}(u | u_i)$, and generate (or, to be more precise, select) the most relevant users for any given user $u_i$.

(2) We model the distribution using a softmax function over all the other users with the transferred user representation $p^{IS}_i$ (from the item to the social domain).

- Here $g^S_{\theta^S_G}$ is a score function reflecting the chance of $u_k$ being related to $u_i$.
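A softmax over all other users, as described above, would have the following shape. The second argument of $g^S_{\theta^S_G}$ is assumed here to be the generator's social domain representation $p^S_k$ of the candidate user, which these notes do not state explicitly:

```latex
G^S(u_k | u_i; \theta^S_G) =
  \frac{\exp\big( g^S_{\theta^S_G}(p^{IS}_i, p^S_k) \big)}
       {\sum_{l \neq i} \exp\big( g^S_{\theta^S_G}(p^{IS}_i, p^S_l) \big)}
```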

(3) Likewise, policy gradient is utilized to optimize the generator $G^S$; the details are omitted here, since it is defined similarly to Eq. (5).

2.5 The Objective Function

(1) The objective function of the proposed framework combines all of the model components.
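A combined objective consistent with the components described in these notes is sketched below. It assumes that the two adversarial value functions are simply added and that the cycle reconstruction loss, written here as $\mathcal{L}_{cyc}$ (a name assumed for this sketch), enters with weight $\lambda$:

```latex
\min_{G^I, G^S, h^{S\to I}, h^{I\to S}} \; \max_{D^I, D^S} \;
  \mathcal{L}^I_{adv}(G^I, D^I) + \mathcal{L}^S_{adv}(G^S, D^S)
  + \lambda \, \mathcal{L}_{cyc}(h^{S\to I}, h^{I\to S})
```

Under this form the generators and the two mappings are trained to minimize the objective while the two discriminators maximize it, and setting $\lambda = 0$ removes the cycle reconstruction term entirely.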

- Here $\lambda$ controls the relative importance of the cycle reconstruction strategy and further influences the two mapping operations.

- $h^{S\to I}$ and $h^{I\to S}$ are implemented as MLPs with three hidden layers.

- To optimize the objective, RMSprop [Tieleman and Hinton, 2012] is adopted as the optimizer in our implementation.

- To train our model, at each training epoch, we iterate over the training set in mini-batches to train each model (e.g., $G^I$) while the parameters of the other models (e.g., $D^I$, $G^S$, $D^S$) are fixed.

- When the training is finished, we take the representations learned by the generators $G^I$ and $G^S$ as our final representations of item and user for performing recommendation.

(2) There are six representations in our model, including $p^I_i$, $q^I_j$, $x^I_i$, $y^I_j$, $p^S_i$, $x^S_i$. They are randomly initialized and jointly learned during the training stage.

(3) Following the setting of IRGAN [Wang et al., 2017], we adopt the inner product as the score functions $f^I_{\phi^I_D}$ and $g^I_{\theta^I_G}$ in the item domain as follows: $f^I_{\phi^I_D}(x^I_i, y^I_j) = (x^I_i)^T y^I_j + a_j$, $g^I_{\theta^I_G}(p^{SI}_i, q^I_j) = (p^{SI}_i)^T q^I_j + b_j$,

- where $a_j$ and $b_j$ are the bias terms for item $j$.

- We define the score functions $f^S_{\phi^S_D}$ and $g^S_{\theta^S_G}$ in the social domain in a similar way.

- Note that the above score functions can also be implemented using deep neural networks, but we leave this investigation as future work.

3 Experiments

3.1 Experimental Settings

- (1) We conduct our experiments on two representative datasets, Ciao and Epinions, for the Top-K recommendation. (Both Ciao and Epinions datasets are available at: http://www.cse.msu.edu/~tangjili/trust.html)

- As these two datasets provide users’ explicit ratings on items, we convert them into 1 as the implicit feedback. This processing method is widely used in previous works on recommendation with implicit feedback [Rendle et al., 2009].

- We randomly split the user-item interactions of each dataset into training set (80%) to learn the parameters, validation set (10%) to tune hyper-parameters, and testing set (10%) for the final performance comparison. We implemented our method with TensorFlow and tuned all the hyper-parameters with grid search [Fan et al., 2019]. The statistics of these two datasets are presented in Table 1.

- We use two popular performance metrics for Top-K recommendation [Wang et al., 2017]:

- Precision@K

- and Normalized Discounted Cumulative Gain (NDCG@K).

- We set K as 3, 5, and 10. Higher values of the Precision@K and NDCG@K indicate better predictive performance.
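The two metrics can be computed per user as in the sketch below, which assumes binary relevance (an item is either in the user's held-out test set or not) and the common $1/\log_2(\text{rank}+1)$ discount. The function names are hypothetical, and the notes do not say which exact NDCG variant was used.

```python
import math


def precision_at_k(ranked, relevant, k):
    """Fraction of the top-k ranked items that are relevant."""
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / k


def ndcg_at_k(ranked, relevant, k):
    """Binary-relevance NDCG@k: DCG of the ranking divided by the ideal DCG."""
    dcg = sum(
        1.0 / math.log2(pos + 2)          # pos is 0-based, so rank = pos + 1
        for pos, item in enumerate(ranked[:k])
        if item in relevant
    )
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(pos + 2) for pos in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0


# Toy example for one user: items "a" and "c" are in the test set.
p3 = precision_at_k(["a", "b", "c"], {"a", "c"}, 3)
n3 = ndcg_at_k(["a", "b", "c"], {"a", "c"}, 3)
```

Dataset-level scores are then the averages of these per-user values over all test users.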

Baselines

- (2) To evaluate the performance, we compared our proposed model DASO with four groups of representative baselines, including

- traditional recommender system without social network information (BPR [Rendle et al., 2009]),

- traditional social recommender systems (SBPR [Zhao et al., 2014] and SocialMF [Jamali and Ester, 2010]),

- deep neural networks based social recommender systems (DeepSoR [Fan et al., 2018] and GraphRec [Fan et al., 2019]),

- and adversarial learning based recommender system (IRGAN [Wang et al., 2017]).

- Some of the original baseline implementations (SocialMF, DeepSoR, and GraphRec) are for rating prediction on recommendations. Therefore we adjust their objectives to point-wise prediction with ==sigmoid cross entropy loss using negative sampling==.

3.2 Performance Comparison of Recommender Systems

Table 2 presents the performance of all recommendation methods. We have the following findings:

- SBPR and SocialMF outperform BPR. SBPR and SocialMF utilize both user-item interactions and social relations, while BPR only uses the user-item interactions. These improvements show the effectiveness of incorporating social relations into recommender systems.

- In most cases, the two deep models, DeepSoR and GraphRec, obtain better performance than SBPR and SocialMF, which are modeled with shallow architectures. These improvements reflect the power of deep architectures on the recommendation task.

- IRGAN achieves much better performance than BPR, while both of them utilize the user-item interactions only. IRGAN adopts adversarial learning to optimize user and item representations, while BPR is a pair-wise ranking framework for traditional Top-K recommender systems. This suggests that adopting adversarial learning can provide more informative negative samples and thus improve the performance of the model.

- Our model DASO consistently outperforms all the baselines. Compared with DeepSoR and GraphRec, our model proposes advanced model components to model user representations in both the item domain and the social domain.

- In addition, our model harnesses the power of adversarial learning to generate more informative negative samples, which can help learn better user and item representations.
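As a rough illustration of what "generating informative negative samples" means, the sketch below draws negatives in proportion to a generator's softmax probabilities instead of uniformly. The `gen_scores` list, the masking of observed items, and sampling with replacement are assumptions made for this example, not details taken from the paper.

```python
import math
import random

def softmax(scores):
    # Subtract the maximum before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def generate_negatives(gen_scores, positives, k, rng):
    # gen_scores[i] is a hypothetical generator score for item i and one fixed user.
    # Observed items are masked out, then k items are drawn (with replacement)
    # in proportion to the softmax of the remaining scores, so items the generator
    # finds plausible are proposed as negatives more often than under uniform sampling.
    candidates = [i for i in range(len(gen_scores)) if i not in positives]
    probs = softmax([gen_scores[i] for i in candidates])
    return rng.choices(candidates, weights=probs, k=k)
```

Note that the softmax here touches every candidate item, which is the cost the conclusion of the paper points out.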

3.2.1 Parameter Analysis

Next, we investigate how the value of λ affects the performance of the proposed framework.

- The value of λ controls the importance of cycle reconstruction. Figure 3 shows the performance with varied values of λ using Precision@3 as the measurement. The performance first increases as the value of λ gets larger and then starts to decrease once λ goes beyond 100.

- The performance depends only weakly on the parameter controlling the bidirectional influence, which suggests that transferring users' information from the social domain to the item domain already significantly boosts the performance.

- However, the user-item interactions and user-user connections are often very sparse, so the bidirectional mapping (Cycle Reconstruction) is proposed to help alleviate this data sparsity problem. Although the performance depends only weakly on the bidirectional influence, we still observe that we can learn better user representations in both domains.
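For reference, Precision@K, the measurement used in the parameter analysis above, can be computed as in the sketch below. It uses the common definition (hits among the top K divided by K); the paper's exact evaluation protocol is not shown in these notes, so treat the function name and arguments as illustrative.

```python
def precision_at_k(ranked_items, relevant, k):
    # ranked_items: item ids sorted by predicted score, best first.
    # relevant: set of held-out items the user actually interacted with.
    top_k = ranked_items[:k]
    hits = sum(1 for item in top_k if item in relevant)
    return hits / k
```

For example, with ranking `[5, 2, 9, 1]` and relevant set `{2, 1}`, only item 2 appears in the top 3, so Precision@3 is 1/3.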

4 Related Work

- (1) As suggested by social theories [Marsden and Friedkin, 1993], people's behaviours tend to be influenced by their social connections and interactions. Many existing social recommendation methods [Fan et al., 2018; Tang et al., 2013a; 2016b; Du et al., 2017; Ma et al., 2008] have shown that incorporating social relations can enhance the performance of the recommendations.

- In addition, deep neural networks have been adopted to enhance social recommender systems.

- DLMF [Deng et al., 2017] utilizes a deep auto-encoder to initialize vectors for matrix factorization.

- DeepSoR [Fan et al., 2018] utilizes deep neural networks to capture nonlinear user representations in social relations and integrates them into **probabilistic matrix factorization** for prediction.

- GraphRec [Fan et al., 2019] proposes a graph neural networks framework for social recommendation, which aggregates both user-item interaction information and social interaction information when performing prediction.

- (2) Some recent works have investigated adversarial learning for recommendation.

- IRGAN [Wang et al., 2017] proposes to unify the discriminative model and generative model with an adversarial learning strategy for item recommendation.

- NMRN-GAN [Wang et al., 2018b] introduces adversarial learning with negative sampling for streaming recommendation.

- Despite the compelling success achieved by many works, little attention has been paid to social recommendation with adversarial learning. Therefore, we propose a deep adversarial social recommender system to fill this gap.

5 Conclusion and Future Work

- (1) In this paper, we present a Deep Adversarial SOcial recommendation model (DASO), which learns separated user representations in the item domain and the social domain.

- (2) Particularly, we propose to transfer users' information from the social domain to the item domain by using a bidirectional mapping method.

- (3) In addition, we also introduce adversarial learning to optimize our entire framework by generating informative negative samples.

- Comprehensive experiments on two real-world datasets show the effectiveness of our model. The calculation of the softmax function in the item/social domain generator involves all items/users, which is time-consuming and computationally inefficient.

- Therefore, hierarchical softmax [Morin and Bengio, 2005; Mikolov et al., 2013; Wang et al., 2018a], a replacement for softmax, will be considered in future work to speed up the calculation in both generators.
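To make the efficiency argument concrete, here is a minimal sketch of hierarchical softmax over a complete binary tree. It assumes one scalar logit per internal node for a fixed user (in a real model this would typically be a dot product between a user embedding and a node vector) and a power-of-two number of items; none of this layout comes from the paper.

```python
import math

def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def leaf_probability(leaf, node_logits, depth):
    # Heap layout (assumed): root is node 1, children of node n are 2n and 2n+1,
    # node_logits[0] is unused, and leaves are item ids in [0, 2**depth).
    # The bits of the leaf id, most significant first, give the left/right path.
    node = 1
    prob = 1.0
    for level in range(depth - 1, -1, -1):
        go_right = (leaf >> level) & 1
        p_right = sigmoid(node_logits[node])
        prob *= p_right if go_right else 1.0 - p_right
        node = 2 * node + go_right
    return prob
```

Each probability needs only `depth` sigmoid evaluations, i.e. O(log N) work instead of the O(N) normalization of a full softmax, and the leaf probabilities still sum to 1 because the two branches at every node sum to 1.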
